
Adrian Michalak-Paulsen and Paul Chaffey

Do We Need New Official Instructions for Studies? A Small Study

Does the state need a new set of Official Instructions for Studies (Utredningsinstruksen)? A welcome debate has emerged in the pages of Stat & Styring on this question, along with several important subtopics.

Is the current Instructions for Studies a good enough tool for making decisions about measures, including when the problems are cross-sectoral, complex, or digital? And can the current Instructions, with its associated guidance documents, provide the professional knowledge base and contextual understanding required?

Let us begin with our own starting point. We believe the six questions in the current Instructions are quite good, and we see no pressing reason to replace them with different questions. However, we find that the toolbox and guidance accompanying the Instructions are incomplete and better suited to certain types of problems and contexts than others. We therefore believe it would be sensible to develop a more comprehensive package of methods and tools to supplement the guidance for socio-economic analyses and the established processes for assessing the legal consequences of measures and the issues of principle they raise.

In particular, we see a need for better methods and tools for working with complex and cross-sectoral problems, where the direct link between cause and effect cannot always be determined in advance or clearly delineated, but must instead be explored and tested in other ways. Sometimes the most important thing is to ask good questions about the measure itself, but how do we arrive at those questions? Large and cross-cutting digital projects also explore, test, and iterate in ways that differ from the more linear approach of the Instructions. Good questions must therefore be supported by better approaches for answering them.

Applying the Instructions to Itself

But we must not move too quickly and propose solutions before clearly formulating the problem and describing alternative options. That would be contrary to the Instructions itself.

What method can we use to define the problem and determine the way forward for the Instructions? Perhaps we can come closer to concrete measures by applying the Instructions to itself through its six questions:

1. What is the problem, and what do we want to achieve?

Strictly speaking, this is two questions in one—but we will return to that. What we want to achieve—and there is likely broad agreement on this—is that the state has good methods and tools that enable the best possible decisions. We want to determine whether the current Instructions is good enough. If not, it must be replaced with something better, as some contributors in Stat & Styring have argued—though not everyone agrees.

2. What measures are relevant?

The zero option is to conclude that the current Instructions is sufficient when properly applied. Another option is to replace it with different questions. This would require evidence that the new questions lead to better and more targeted measures, and it might also require updated guidance materials.

A third and less radical option is to retain the six questions but improve parts of the toolbox and guidance. If so, two additional measures might be relevant:

Expanding Question 1 with an additional question about what kind of problem is to be solved.

Creating space for testing, experimentation, and adjustment—an “instruction for reconsideration” or a “trial-and-error guide” that lives alongside the Instructions.

3. What issues of principle do the measures raise?

Any renewed Instructions must operate within existing legal and governance frameworks. But this raises the question: do current governance principles allow sufficient flexibility to manage complex, cross-sectoral processes requiring course adjustments?

For example, the Financial Management Regulations for the State emphasize both annual and multi-year planning. A better toolbox could strengthen long-term planning, benefit realization, and risk reduction.

4. What are the positive and negative effects of the measures?

A strengthened set of Instructions would likely improve decision-making overall. However, it is difficult to determine in advance what wording or guidance will yield the best outcomes, particularly before some experimentation has occurred. A more flexible and differentiated toolbox may be appropriate, but this must be weighed against the advantages of a standardized system used across sectors.

5. Which measures are recommended, and why?

The answers so far suggest possible directions but do not provide a definitive recommendation. This is unsurprising: the Instructions must work across many contexts. We can at best propose further process steps to assess whether a better toolbox is needed.

6. What are the prerequisites for successful implementation?

If changes are well anchored and communicated, implementation should not be overly difficult. But here lies perhaps the most interesting issue: how can we assess prerequisites for success before implementation has begun? Some contexts allow for prediction; others require experimentation and learning. The more complex the actor landscape, the harder it becomes to know in advance. In such cases, the ability to adjust course quickly becomes crucial.

What Could Be Improved?

This exercise demonstrates that some measures require more than answers to six questions. Economic, technological, and legal analyses cannot on their own provide clear answers in advance.

When facing complex problems, we seek to illuminate the issue, produce knowledge, and assess alternatives as well as possible. There will never be a perfect solution—only continuous improvement. A good approach is often to retain what works while supplementing it with better guidance, evaluations, learning loops, and improved experimentation methods.

Thus, the Instructions works fairly well and should not be discarded—but it does not work equally well in all contexts. It should therefore be supplemented.

We believe at least four improvements are needed:

1. A Framework for Categorizing Problems

Beyond defining the problem and goal, we must ask: what kind of problem is this?

Some problems have established best practices. Others are complicated but solvable through expert analysis (“rocket science”). Still others are complex, where cause and effect are not predictable and must be explored collaboratively across sectors.

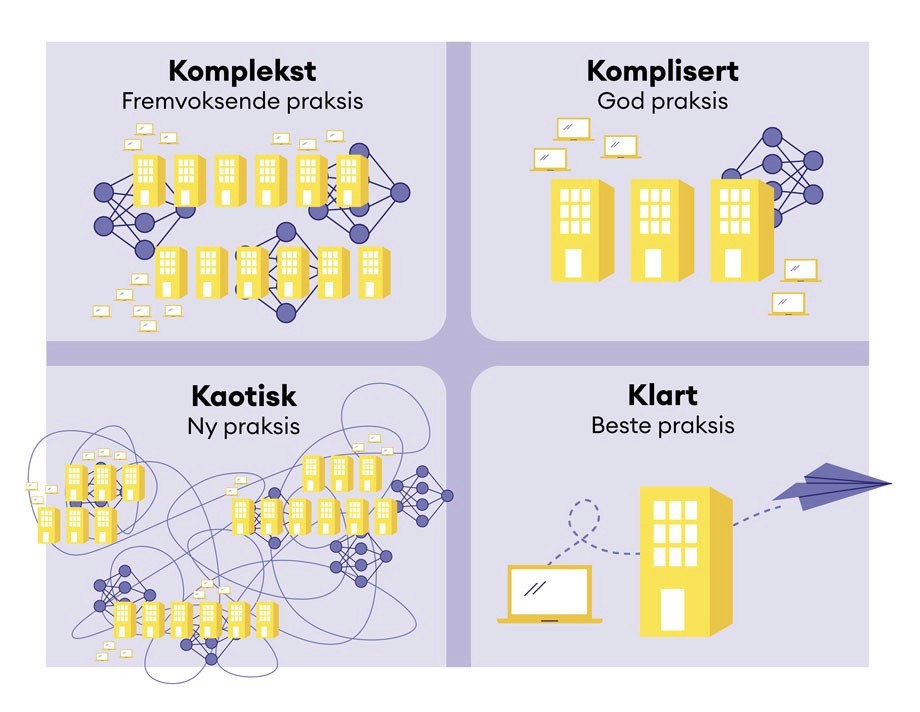

A useful reference here is David Snowden’s Cynefin framework, described in the 2007 Harvard Business Review article “A Leader’s Framework for Decision Making,” co-authored with Mary E. Boone. Cynefin distinguishes between simple, complicated, complex, and chaotic problems, each requiring a different approach.

A good toolbox should include guidance on using such a framework to determine how to proceed.

2. A Toolbox for Better Implementation

In complex contexts, the main challenge is often not defining measures but ensuring implementation capacity.

The Norwegian government system is an efficient “decision machine,” with structured cabinet meetings and standardized decision documents. The decision-making itself functions well.

Implementation is another matter. There is no equivalent “implementation machine.” Parliamentary attention largely ends after budget decisions are made.

But deciding is not the same as achieving intended results. Cross-sectoral initiatives often struggle due to weak coordination—not insufficient analysis.

What is needed may be less analysis and more action. Guidance on cross-sector collaboration and coherent service development, such as the recent publications from DFØ and Digdir, is a promising starting point.

3. Better and Faster Ways to Reconsider

An overemphasis on pre-decision analysis can create an “analysis hegemony,” where prestige prevents course correction.

A recent Stimulab project on cross-sector governance introduced “dynamic benefit management,” which allows direction and deliverables to evolve as new insights emerge.

Similarly, Norway’s 2016 Digital Agenda emphasized five principles for reducing risk in digital projects:

Start with user needs

Think big, start small

Choose the right partner

Ensure proper competence and leadership

Deliver frequently and adjust based on feedback

Large IT project failures have shown that course adjustment is essential. Perhaps what is missing is a formalized “adjustment instruction” enabling structured experimentation and learning.

4. A Guide for Systemic Challenges

Together, these additions could form a system innovation toolbox—supporting work in complex systems where full analysis in advance is impossible.

We must avoid believing that better decisions can be “magically” produced through improved instructions alone. Many solutions lie within demanding change processes and leadership.

Innovation in complex systems requires exploration, experimentation, and iteration. The gap between policy-makers and implementers must shrink.

The challenge is therefore larger than rewriting the Instructions. We hope DFØ, Digdir, and their ministries recognize the need for a more complete package of methods, examples, and guidance that complement—and sometimes go beyond—the current Instructions.

Only then can the public administration develop the capability and willingness to make sound choices in a changing society.

Paul Chaffey

Special advisor

paul.chaffey@halogen.no